Re: Invent - ML Inference & Silicon Dynamics

As we look to a future state of autonomous networks, it is clear that machine learning will be a critical component in that journey. Today’s AWS re:Invent announcement of its new in-house-designed inference chip, Inferentia, puts a spotlight on both ML inference and use-case focused silicon.

AWS claims that in the long run, most of the cost of ML will be in inference, but today most people are focused on training costs, because that is where they are in their ML journey. There are startups in both the training and inference silicon space, which underlines the move away from general-purpose silicon to more focused silicon. I am reluctant to call this “custom” silicon because that term has a specific meaning in the silicon design area, so I will call it use-case focused silicon. I could call it application-specific integrated circuits (ASICs), but that term may have lost its original meaning by now. Recommendations welcome!

Where will Inference & Training be in the Internet?

The conventional wisdom is that training is done where the large repositories of data are, and inference is done at the point of application. This would tend to imply centralized training and distributed inference. However, I am hearing from those working in startups that there is demand for distributed training as well. One of the drivers of demand for edge compute these days is avoiding the transport of all data to centralized processing points. That cost is not just Internet or private network transport, but also the charges levied by cloud providers.

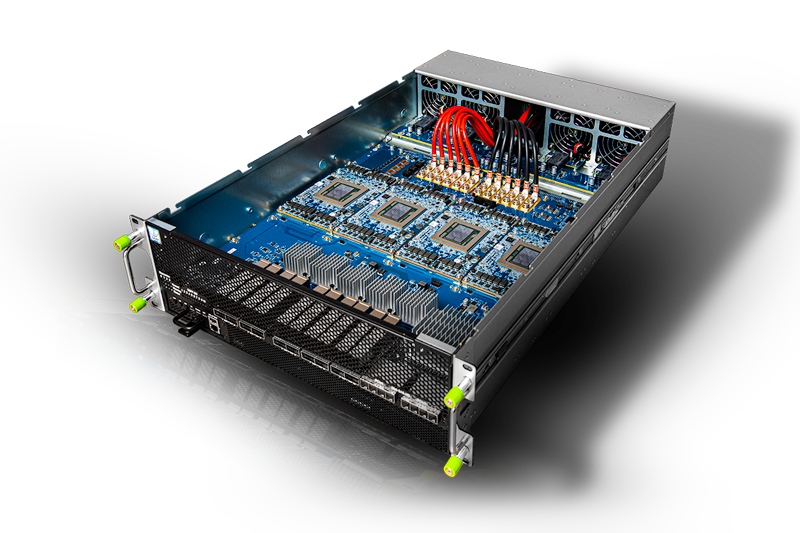

Image Source: Habana (an Intel Company). Habana HLS-1H Server.

AWS also announced today EC2 instances based on Habana/Intel’s Gaudi chip, which AWS says will have 40% better price-performance than its current GPU-based offerings. When you think about how GPUs were the accidental tourists in kickstarting the current ML revolution, you can only wonder what silicon built deliberately and specifically for ML will do for the future of ML. Intel’s Gaudi chip also has integrated RDMA over Converged Ethernet (RoCE).
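It is worth pausing on what a "40% better price-performance" claim means in dollars. A minimal sketch, assuming price-performance means work done per dollar (my reading, not a definition AWS gave in the announcement): a 40% gain means the same workload costs 1/1.4 of the baseline, which is a saving of roughly 29%, not 40%. The function name `relative_cost` is hypothetical and only for illustration.

```python
def relative_cost(price_performance_gain: float) -> float:
    """Cost of a fixed workload relative to the baseline (1.0 = same cost).

    Assumes price-performance is work per dollar, so a fractional gain g
    means each dollar buys (1 + g) times as much work.
    """
    if price_performance_gain <= -1.0:
        raise ValueError("gain must be greater than -100%")
    return 1.0 / (1.0 + price_performance_gain)


gain = 0.40  # the 40% figure quoted above
cost = relative_cost(gain)
savings = 1.0 - cost
print(f"Relative cost: {cost:.1%}, savings: {savings:.1%}")
# prints "Relative cost: 71.4%, savings: 28.6%"
```

The same arithmetic run the other way says that for a fixed budget you get 40% more training or inference done, which is the more flattering way to state the same number.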

When you look at some of the server configurations Habana, an Intel company, is putting together, it is not hard to imagine some fairly powerful edge compute nodes doing training or inference in a reasonably small footprint. Oh, BTW, Amazon announced today the availability of three new Local Zones in the US this year, with another 12 coming in 2021. For some use cases the edge will be a factory floor; for others it will be a metro area. If that does not float your boat, you can run AWS software in your own locations. As AWS head Andy Jassy says, “there are a lot of ways to bring the AWS experience to wherever customers are”. That includes, specifically, the 5G edge, which, not coincidentally, the telecom industry assumes will be a virtualized edge.

What about ML in Next Gen Routers?

As the threat from Broadcom becomes more existential, you can see the ripples across the industry, not the least of which is Cisco’s Silicon One announcement this year.

There was a time when routers had so many different types of interfaces that merchant silicon threats were kept to a dull roar. However, with the shift to Ethernet, and an emerging shift to simplified networking architectures, it has been easier for Broadcom to catch up, assisted by the scale it has achieved in cloud. The lack of growth in SP spend, a manifestation of flat SP revenue, is also a factor playing into the network equipment chessboard.

I do not have a strong view on how much better in-house network processors are than merchant silicon. I truly don’t, and I certainly have a high level of respect for engineers who develop in-house silicon. However, let’s say, for the sake of argument, that customers do not perceive compelling differences. When I say compelling, I mean somewhere in the range of 2 to 10 times better. What then?

If the game isn’t working for you, change the game.

Listening to AWS re:Invent, I could not help but think this should have been IBM, but it wasn’t, and it isn’t. Famously, AWS was not the original Amazon mission. It is, I suppose, another happy IT accident, a capability that emerged from what Amazon did to reinvent retail.

“Reimagine” is a word you hear frequently in tech. If someone has already invented something, cloning it in an incremental fashion is often not a big win. More than likely you will be competing on price (which is OK if you have reinvented the structure of production, but not OK if you have not). Reimagine something, on the other hand, and you may be creating new value, which people will pay for.

Router vendors need to reimagine the router with a degree of change similar to how AWS reimagined computing. Not necessarily the same model, but a significant degree of new value. It is hard to imagine ML not playing a significant role in that reimagining. Broadcom has already announced some “AI” tech of its own.

Apple has been shipping its own chips in phones for many years, and has just announced its own chips for laptops. Apple has a clear problem to tackle: power and startup time. However, its chips go well beyond that. The bigger point is that tech giants are turning towards their own silicon: Apple, Amazon, Google, and others. Yes, they have the investment capacity to do many things at once. They are also, though, providing proof points for focusing silicon development in ways that bring value to their business.

The question is never really whether merchant silicon or in-house silicon is better. Engineering teams, given the same mission, will produce similar results, modified by talent and experience. Strategy is often about a point of focus around which self-organization occurs. The issue is often whether one or the other company reimagined the customer experience, and as a result, optimized their design for realizing that customer experience.

Conclusion

Even Intel realizes that the era of general-purpose silicon for everything has come to an end. Whether it’s mobile graphics performance, power, ML training, or ML inference, the number of use-case focused silicon efforts is expanding. All networking strategists have to reimagine what it means for them. Arguably, all technology leaders in all industries have to give this thought. What does the next generation router look like? Who will be the biggest chip manufacturer in ten years: Google, Amazon, Intel, Broadcom, a company that is now just a startup, or someone else? Early in the new year, strategists will start putting on their thinking caps. These topics should be on the agenda.