Introduction

Marketing hype and blatant misuse notwithstanding, it was always a bit of a storm in a teacup: is something Artificial Intelligence (AI) or Machine Learning (ML)? After all, ML is just one of many technologies that are part of the AI family.

The commitment to the “truth” often sees many of us arguing at the margins, which, IMO, is where we are with AI and ML. The distinction may matter to someone doing focused research. However, for most of us, 2022 pretty much broke through any inhibitions about using the term AI.

The technological wonders on display in 2022 gave us a glimpse of what is possible. While there may still be a need for double-clicks, explanations, and more than hand-waving, technologists and marketers can safely utter “AI” going forward. Some will raise the bar to Artificial General Intelligence (AGI) as the definition of AI, but realistically, AI arrived in 2022. Maybe not in a NOC near you, but it did in terms of an overall halo throughout tech.

AI in Networking

While IT AIOps has long been discussed as the answer for operations teams managing newly complex cloud application architectures, it took a little longer to come to Networking. At the end of 2022 we can say it has arrived. Worst case, it is arriving.

Leading Network AIOps platforms now:

Use (unsupervised) machine learning for pattern matching

Correlate multiple data sources / data points

Traverse sophisticated multi-layer, topology-based network models

Apply natural language processing to understand the semantics of information

These platforms can identify patterns that are not apparent from visualizations / monitoring dashboards, dramatically reduce the number of alerts / trouble tickets that have to be managed / actioned, identify the root cause of an incident by modeling and understanding the relationships in a network, and detect new / rare information that often precedes incidents (unknown unknowns - things that rules are not yet looking for).

These platforms are not designed for only one task. They address a Network Operations process with multiple AI/ML technologies, ingesting multiple data sources from which noise-reduced trouble tickets are created and / or automation actions are triggered.
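As a rough illustration of the topology-based correlation described above, here is a minimal Python sketch. It is not how any particular platform works: the `Alert` type, the `UPSTREAM` map, the device names, and the "highest alerting ancestor" heuristic are all assumptions made up for this example, and the topology is assumed to be a simple tree with no loops.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    device: str
    message: str


# Hypothetical topology: each device maps to its upstream neighbor
# (None marks the top of the tree). Assumed to contain no cycles.
UPSTREAM = {
    "core-1": None,
    "agg-1": "core-1",
    "access-1": "agg-1",
    "access-2": "agg-1",
    "agg-2": "core-1",
}


def root_candidate(device, alerting, upstream):
    """Return the highest alerting device on the path from `device` to the top."""
    candidate = device
    current = upstream.get(device)
    while current is not None:
        if current in alerting:
            candidate = current
        current = upstream.get(current)
    return candidate


def correlate(alerts, upstream=UPSTREAM):
    """Group alerts into one ticket per probable root device."""
    alerting = {alert.device for alert in alerts}
    tickets = defaultdict(list)
    for alert in alerts:
        root = root_candidate(alert.device, alerting, upstream)
        tickets[root].append(alert)
    return dict(tickets)


alerts = [
    Alert("agg-1", "interface down"),
    Alert("access-1", "unreachable"),
    Alert("access-2", "unreachable"),
    Alert("agg-2", "high CPU"),
]
tickets = correlate(alerts)
for root, grouped in tickets.items():
    print(root, [alert.message for alert in grouped])
```

In this toy case the four alerts collapse into two tickets: the two unreachable access devices are folded into the `agg-1` ticket because `agg-1` sits upstream of them and is itself alerting, while `agg-2` stays separate. A real platform would also weigh timing, multiple topology layers, and learned patterns.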

As an industry, we often say “needles from the haystack”. In some cases Network AIOps platforms do what existing tools do, but better. In other cases, what people are currently doing, but faster. And in yet other cases, new things that neither existing tools nor people are doing. Better, faster, different.

In 2023 there will no doubt be many resources allocated to refining innovations brought to market in 2022. However, we can expect more innovation to come. Some of it will be truly network-specific, and some of it will draw on larger tech trends and AI/ML innovations.

Generative AI

Tools that did not exist one year ago: ChatGPT, Whisper, GPT-3, Codex, GitHub Copilot, InstructGPT, Text-to-product, AI slides, DALLE + API, Midjourney, Stable Diffusion, Runway videos, Email AI, AI chrome extensions, Replit Ghostwriter, No-code AI app builders. Source: Ben Tossell. (https://twitter.com/bentossell).

Wow!!! What a year. Large language models (ChatGPT), text-to-image (DALL-E, Stable Diffusion, and others), marketing copy, development environments that recommend code (GitHub Copilot), and more. Things are moving fast.

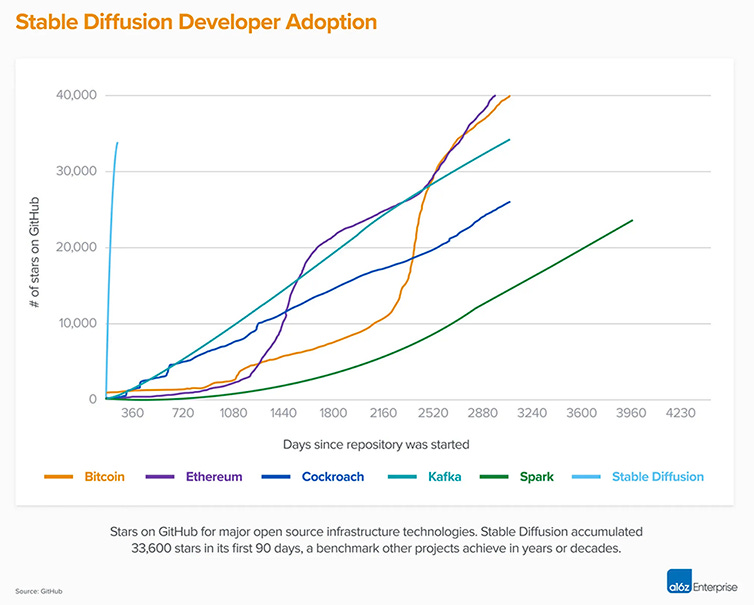

In addition, adoption is off the charts. Just consider the adoption of Stable Diffusion (a text-to-image capability similar to DALL-E), shown in the image above. Stable Diffusion is the near-vertical blue line on the far left.

Over recent years, there has been a joke that went something like “The most important skill a (software) engineer has is Google Search”. Just as most people had come to believe Google had an insurmountable moat around its business, ChatGPT came along in the last few weeks of 2022 and cast significant doubt on that assumption. The “most important” skills an engineer has might be changing before our eyes.

Is Google suddenly irrelevant? Probably not. If you want to see the world indexed in real-time, Google and other search engines are still the place to go relative to chatbots trained on historical data. The contrast between historical search/AI and real-time streaming AI is a subject close to my heart. Many people still used search engines in 2022, and many will still use them in 2023.

However, the sands are shifting for some use cases.

The Turing Test

The Turing test has historically been the standard for testing (artificial) intelligence.

“Turing proposed that a human evaluator would judge natural language conversations between a human and a machine designed to generate human-like responses”. Source: Wikipedia.

Are we there yet?

Seems like we are getting close.

Artificial General Intelligence

AI/ML can do things that humans could never dream of doing, for example finding patterns in, and correlating across, billions of streaming data points a day. AI/ML can even do some things that humans can do. However, there remain many things that humans can do that AI/ML cannot do. One concept that stands out is referred to as Artificial General Intelligence (AGI).

AGI, or “strong AI”, differs from narrow AI, or “weak AI”, in having general cognitive capabilities rather than being focused on just one task. This 6-minute video provides some insight into AGI.

Should AGI be the bar for anything being called “AI”? Personally, I would say no. However, as the speaker in the above video says, talk of AGI seemed crazy 3 years ago. Today, talk of AGI seems much less crazy. Predictions of imminent AGI are now rampant on social media. The actual time frame? Well, I for sure don’t know. However, rapid innovation seems likely. So for those who want to set AGI as the bar for using the term AI, that day may be much closer than we once thought.

A Word of Expectations Caution

GPT-4 is expected in 2023. How GPT-4 will differ from GPT-3 is a hotly debated subject, with rumors ranging from 1,000 times more parameters to 1,000 times more training data (see note 1). The result? Huuuuuuuuuuuuge expectations. Some tweeters are even predicting 2023 is going to make 2022 look like a sleepy year for AI. With such elevated expectations, some disappointment seems inevitable.

There is now a saying that generative AI is “generative, not creative”. That immediately points in the direction of one limitation. Other limitations will be the everyday mistakes made by generative AI: an answer from ChatGPT that is easily demonstrated to be wrong, for example.

The same will be true in networking. Network AIOps will miss some anomalies. Never mind that it will detect many more anomalies than current systems, and never mind that it will discard the noise created by (multiple) current systems, there will be anomalies that it misses. There will be those that point to the misses as reasons not to use Network AIOps, and there will be those that take those misses and learn from them, ever moving forward. My money is on the latter, but yes, there will be disappointments relative to expectations all over AI/ML. There are disappointments with every technology, and most humans as well.

Conclusion

Network AIOps platforms do not yet cover the full spectrum of Artificial Intelligence approaches / technologies and they are definitely nowhere near Artificial General Intelligence, for a variety of technology and data reasons. However, that is, IMO, framing the conversation in a misleading way.

There is now a general halo over all of AI/ML created by the broader AI developments in 2022, not to mention what is coming in 2023. AI in Networking is on a trajectory that creates risk for anyone remaining stuck in a frozen impression of the tech at any given point in time - the ball is moving forward, at a good velocity.

The AI landscape changed dramatically in 2022, and with GPT-4 rumored to be released in 2023 as yet another step-function forward, we are in a period of rapid innovation. Sit on the sidelines at your own risk. We are close enough to the future now that we can safely call the technologies being used AI, or even AI/ML if you prefer (which is what I often do).

Note: (1) Jan 12, 2023 - Early rumors on GPT-4 focused on 1,000 times more parameters; however, the social media focus has now shifted to 1,000 times the amount of data it is trained on. How GPT-4 will differ from GPT-3 is a matter of speculation for most people.

Ok, now John Robb has found the business case for buying Twitter; the AI ML stream for AIGPT will be his - before others. Like Wall Street trading algos.

https://twitter.com/johnrobb/status/1612814861465001986?s=12&t=8mejYQk3Ck92lH8sFAfo-g

Thanks!